With the current popularity of ChatGPT and the looming development of Artificial General Intelligence (AGI), how is the English language learner being affected?

The advent of ChatGPT has had a significant impact on language learners worldwide. In addition, there are serious consequences for teachers in higher education (HE) contexts, who evaluate learners on the basis that the work they submit is original.

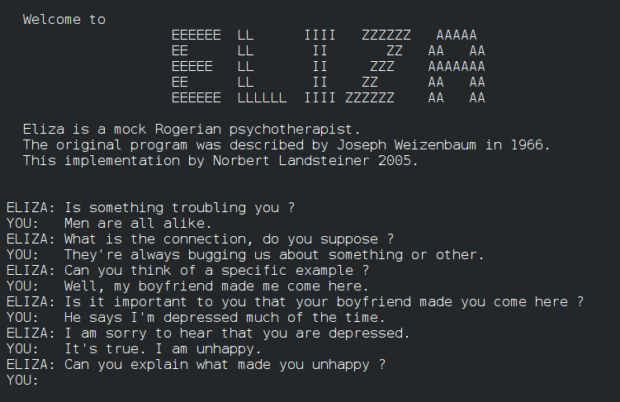

ChatGPT is trained with extensive data trawled from the internet. Note the use of the present tense: it is constantly being updated and learning from the input that internet users openly share on the World Wide Web. We need to think of ChatGPT as a living and growing organism that is constantly gaining information and becoming stronger. It all sounds rather like science fiction, and if we think back to many of the sci-fi television series from the 1980s, we would be right in thinking so.

ChatGPT will be a year old next month, and it is leaving its AI footprint on diverse industries. These include pharmaceuticals, wealth management, and bioweaponry. My primary concern is its use in English language learning, most notably academic English in HE contexts, because that is one of my areas of expertise.

The problems all begin with academic integrity, or rather its breach, more commonly known as plagiarism. HE learners are clearly guided towards using one of the acknowledged citation and referencing systems. For example, they can use the Harvard system to reference all the academic source texts they refer to in their academic writing. This means they have to cite ideas AND acknowledge them, which will result in their work being 100% original. This also ensures that the criticality of the ideas presented aligns with the citations, and a sound piece of academic writing is created. If, however, ChatGPT is used, the citations may be sound and the writing will be flawless, but the ideas tend to be hollow. This is where students are being caught out.

So, my message here is that while ChatGPT may have many strengths, supporting students with academic writing is, in my honest opinion, not one of them.