A new year AND a new decade, so what does 2020 mean for Ed Tech? Twenty years ago we were getting to grips with communicating via email. Ten years ago iPhones had already been around for three years, but their price bracket pitched them out of reach for the majority of mobile phone users. Now here we are in 2020, with driverless car technology being widely tried and tested, and with China witnessing the birth of the third gene-edited baby. So where does this leave language learning and tech, and what is in store for the near future?

Where we are now

Apps, apps, apps… With the 2019 gaming community reaching a population of 2.5 billion globally (statista.com), it is no surprise that apps are an attractive option for learning English. The default options tend to be Babbel, Duolingo and Memrise, but there is a plethora of options to choose from. Some recent fun apps I have experimented with are ESLA for pronunciation, TALK for speaking and listening, and EF Hello.

In the classroom, however, the digital landscape can be quite different. Low-resource contexts and reluctance from teaching professionals to incorporate tech into the learning environment can mean that opportunities for learners to connect with others and seek information are not available. Even in some of the most tech-saturated societies, 19th-century rote-based learning and high-stakes testing approaches are favoured.

Predictions for the future

Does educational technology have all the answers we need to improve the language output of ESL learners globally? No, probably not. However, society has been so dramatically altered by the impact of technology in almost every facet of our lives that it would, I feel, be rather odd to reject it in teaching and learning environments.

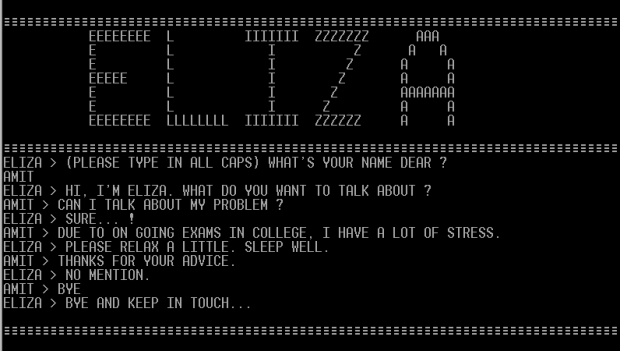

In higher education the main concerns are data privacy and ethics, given the exposure to digital areas such as the cloud. Yet chatbots are starting to be integrated to support students asking university-related FAQs. Both the Differ and Hubert chatbots are being researched for their potential to improve qualitative student interaction and feedback.

Kat’s predictions

In all honesty, I think it is a tough call to gauge where we will be with Ed Tech during the next ten years. Data privacy is a considerable issue when incorporating elements of AI into learning fields. This is not an issue with VR and AR, which helps to explain their relative proliferation in teaching and learning. I feel that VR and AR will continue to mature and provide a more full-bodied learning experience when using VLEs. This may, however, be a slightly more complex paradigm than some may be able or prepared to employ.

I still firmly believe that reflective practice is a solid foundation for learners using recorded audio or visual content of their language production. So while this doesn’t mean the introduction of a big pioneering tech tool, it highlights the relevance of reflective practice as a reliable learning tool. In the same way, I continue to use WhatsApp, WeChat and Line to share learning content with learners and encourage them to interact with each other and with other learning communities.