Enthusiasm can be displayed in different ways. It can also be present in a learner who shows no visible signs of it: they are simply enjoying the learning and keen to learn more.

My current research focuses on how learners demonstrate their enthusiasm when interacting with a speech recognition interface, through both linguistic and non-linguistic features. The dataset I am using clearly shows that learners' psychological state affects their enthusiasm, and therefore their language output and capacity to engage in learning, more than any other factor. While this came as a surprise, it aligns with theories of motivation and learning, which hold that positive emotional (and hence psychological) states favour learning, while negative emotional states (anxiety, stress, depression) can adversely affect it.

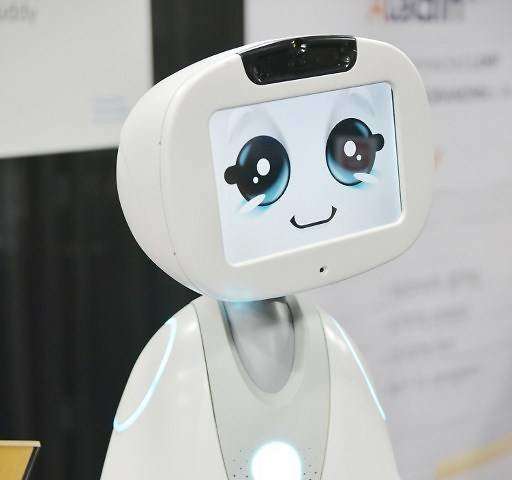

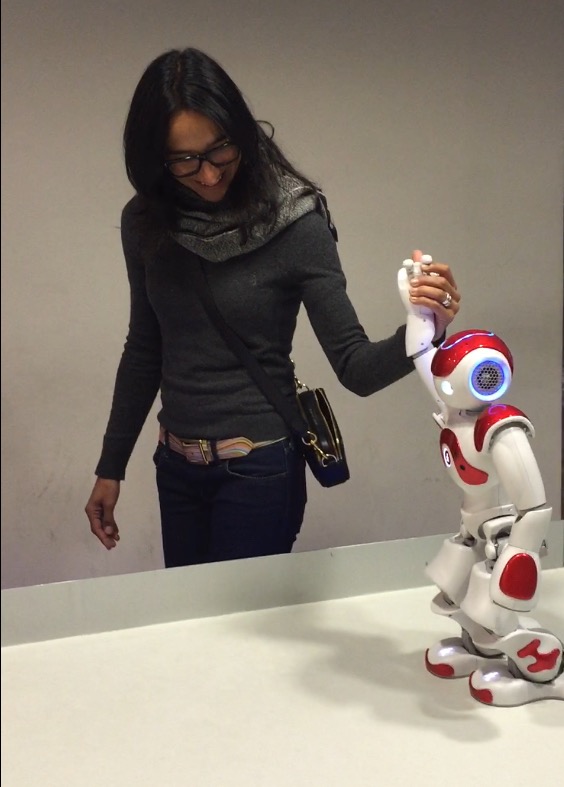

I’ve spent a lot of time with humanoid robots, speech recognition interfaces, and autonomous agents, and despite their degree of humanness, there is something decidedly safe for me about interacting with a non-conscious being. Maybe that is why Weizenbaum’s research was so successful! The non-judgmental nature of a machine makes users feel comfortable interacting with it, and therefore they get more out of the learning experience. This is something I am still investigating, but Buddy, the robot in the image above, aims to understand the mood of the user and then respond accordingly. So empathy is now going beyond human…