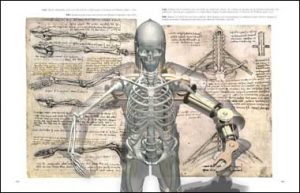

With the surge of interest and investment in AI, the question at the forefront of my mind is ‘What does it mean to be human?’ The apparent obsession in AI is with replicating human intelligence on all levels, but the problem I have with this is that I don’t think we fully understand what it means to be human. I think it is impossible to reproduce human ‘intelligence’ without first appreciating the complexities of the human brain. Hawkins (2004) argues that the primary reason we have been unable to build a machine that thinks exactly like a human is our lack of knowledge about the complex functioning of cerebral activity, and about how the human brain is able to process information without thinking.

This is why the work of Hiroshi Ishiguro, the creator of both Erica and Geminoid, interests me so much. Ishiguro’s motivation for creating android robots is to better understand humans, in order to build better robots, which can in turn help humans. I met Erica in 2016, and the experience made me realise that we are perhaps pursuing goals of human replication that are unnecessary. Besides, which model of human should be used as the blueprint for androids and humanoid robots? Don’t get me wrong, I am fascinated with Ishiguro’s creation of Erica.

My current research focuses on speech dialogue systems and human-computer interaction (HCI) for language learning, which I intend to develop so that it can be mapped onto an anthropomorphic robot for the same purposes. Research demonstrates that one of the specific reasons non-human interactive agents are successful in language learning is that they disinhibit learners and therefore promote interaction, especially amongst those with special educational needs.

The attraction of humanoid robots and androids for me, therefore, is not necessarily how representative they are of humans, but rather the affordances of their non-human aspects, such as being non-judgemental. In my opinion, we need more Ericas in the world.